In theory, predictive policing could make law enforcement fairer and more efficient. In practice, this depends on several factors, notably whether the underlying data was collected in an unbiased way, which is often not the case. As a result, predictive policing frequently reinforces and amplifies the prejudices that have informed policing in the past, leading to problems such as the continued over-policing of certain minority communities.

What is predictive policing?

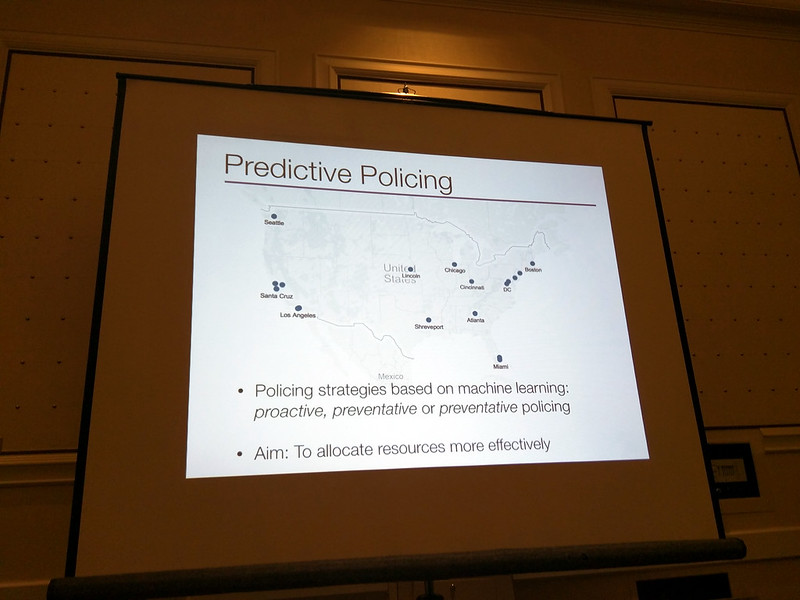

Predictive policing refers to the use of predictive analytics, based on mathematical models and other analytical techniques, in law enforcement to identify potential criminal activity. It uses computer systems to analyse large sets of data to help decide where to deploy police or to identify individuals who are purportedly more likely to commit or be a victim of a crime.

Broadly speaking, there are two applications of predictive policing. The first is the use of arrest data to predict geographical crime hotspots. The second is the mining of social media data to estimate how likely it is that someone might commit a crime. Unprecedented amounts of publicly available personal information open up possibilities for more intrusive forms of predictive policing. Not only does the wide availability of facial images enable more intrusive uses of CCTV cameras, but data on online behaviour might also lead to individual profiling and risk assessments.

The benefits

1. Crime prevention

You may have heard that predictive policing is very efficient in preventing crime. Indeed, a number of studies seem to support this claim. For example, the implementation of predictive policing in Santa Cruz (California) over a six-month period appears to have resulted in a 19% reduction of burglaries (as well as two dozen arrests). Using an algorithm, the system employed verified crime data to predict future offenses in 500-square-foot (about 46.5 square metres) locations. The police would then be provided with “hot spot” maps, which indicated the high-risk locations. Officers would pass through these areas when they were not obliged to address other calls. No one dispatched or required them to patrol the sites; they did it as part of their routine extra checks.
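To make the idea of a “hot spot” map more concrete, here is a minimal sketch of one simple way such a map could be built: divide the area into square grid cells, count past incidents in each cell, and rank the cells. This is only an illustration, not the algorithm used in Santa Cruz, which is not described here. The function name `rank_hot_spots`, the coordinates and the cell size are all made up for the example.

```python
from collections import Counter


def rank_hot_spots(incidents, cell_size, top_k):
    """Count incidents per square grid cell and return the top_k busiest cells.

    Hypothetical helper for illustration only; real systems use more
    elaborate models than a plain count.
    """
    counts = Counter(
        (int(x // cell_size), int(y // cell_size)) for x, y in incidents
    )
    # Sort by descending count, then by cell index so ties are deterministic.
    ranked = sorted(counts.items(), key=lambda item: (-item[1], item[0]))
    return ranked[:top_k]


# Made-up incident coordinates in arbitrary units (not real data).
incidents = [(10, 12), (14, 18), (11, 19), (52, 8), (55, 3), (90, 90)]
hot_spots = rank_hot_spots(incidents, cell_size=20, top_k=2)
print(hot_spots)  # [((0, 0), 3), ((2, 0), 2)]
```

Note that a map built this way can only reflect the incidents that were recorded in the first place, so its quality depends entirely on how that data was collected.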

The Los Angeles Police Department (LAPD) tested the method in the context of LA’s much larger population and more complex patrol needs. The department distributed maps to officers at the beginning of roll call, much like in Santa Cruz. However, some maps were produced using traditional LAPD hot spot methods, while others were created by the algorithm. The officers were not told where the maps came from. The algorithm proved twice as accurate as the LAPD’s existing practices. While property crime was up 0.4 percent throughout Los Angeles, in the Foothills, the area where the algorithm was used, it declined by 12 percent.

2. Informed decision-making

Computer data analytics provide an abundance of information. According to its supporters, predictive policing could lead to more objective decision-making, discouraging police officers from making arbitrary decisions that might be based on bias rather than evidence. This was the view of a former Attorney General of New Jersey, who argued that data-driven policing is potentially groundbreaking. By introducing the use of predictive technologies, the Attorney General was able to take Camden, New Jersey, off the top of the list of the most dangerous cities in America. Murders there fell by 41 percent, and all crime in the city by 26 percent. Most importantly, the office went from prosecuting low-level drug crimes that took place outside the police department building to pursuing cases of statewide importance, such as reducing violence among the most violent offenders and prosecuting street gangs, gun and drug trafficking, and political corruption.

3. Advancement of the criminal justice system

Predictive policing has the potential to make policing fairer. By promoting decision-making based on objective evidence, predictive policing could potentially alleviate certain discrepancies in the enforcement of the law. When the algorithm compiled the crime hot spot map in the above-mentioned LA study, it did not directly rely on prejudice. By contrast, the traditional LAPD hot spot maps were produced by (inevitably prejudiced) humans. As a result, algorithms might be able to help police officers to better predict risks, determine the identity of offenders, and identify the vulnerabilities of a community and its members. However, this potential can only be realised if the algorithm is indeed free of bias, which is not always the case, as we discuss below.

4. The progressive uses of predictive policing

Data has the potential to be a force for good. For example, predictive technologies could be used to provide early warning of harmful patterns of police behaviour. Indeed, police departments could use data analytics as a tool to anticipate officer misconduct. Experience in Chicago and elsewhere shows that police misconduct follows clear and consistent patterns, and that training and support of at-risk officers can help avert incidents of such misconduct. Similarly, predictive policing systems could be deployed to assess whether a given law enforcement agency is likely to treat different neighbourhoods or people of different ethnicities similarly. This could help make police aware of any biases that might be damaging the trust of the public or wasting resources.

The drawbacks

1. Privacy concerns

As discussed, predictive policing has two applications: the use of geographic arrest data to inform how police forces deploy officers, and the use of data about individuals gathered from the internet, such as social media accounts and CCTV footage, to predict the likelihood that an individual will commit a crime. The first of these, assuming it’s based on anonymised arrest data, probably doesn’t pose risks to privacy. But the second of these - mining publicly available information and CCTV footage - clearly does pose serious risks to privacy.

Some data might be too personal to be stored, and those in control of it might lack the capability and professionalism to keep it secure. Information collected and stored by a police department is liable to be leaked, especially as data security is costly in terms of training and personnel. Considering the sensitive nature of such information, the possibility of data leaks is particularly alarming. For example, would you be comfortable with the police holding data about what you did last weekend? Probably not, especially if you consider that the data could leak, with potentially damaging effects on your private and professional life.

The digital environment and, in particular, the ready availability of so much personal data on social media intensify these concerns. A study explores the collection of data for law enforcement, highlighting the controversies linked to the increasingly important role social media data plays in data-driven policing. Many social network users fail to perceive their digital environment as public, yet search engines and other automated analytics greatly enhance the potential for state surveillance of what they post. Worryingly, the law does not curtail social media intelligence and its use by law enforcement and security authorities. The consequences will be increasingly significant as more and more personal data is disclosed and collected in public without well-defined expectations of privacy. As data protection lawyer Alan Dahi maintains, “Just because something is online, it does not mean it is a fair game to be appropriated by others in any way they want to -- neither morally, nor legally.”

Aside from privacy concerns, if personal data is used to predict an individual’s proclivity to commit crime, the presumption of innocence - whereby everyone is considered, and treated as, innocent until proven guilty - is undermined.

2. Lack of accuracy

The reliability of predictive policing depends on the quality of the data and the integrity of its implementers and users. A report observes that the practice is not a crystal ball that can accurately predict the future.

When it comes to using data to predict crime hot spots, decades of criminology research show that crime reports and other statistics gathered by the police primarily document law enforcement’s response to the reports they receive and the situations they encounter, rather than providing an objective or complete record of all the crimes that occur. Put differently, the databases tell us where crime has been spotted by the police in the past. But we know that in many countries the police dedicate disproportionate resources to targeting certain minority groups. The result is that racist practices get baked into the data. Predictive policing then becomes a vicious circle: crime statistics collected on the basis of a racist policy will create racist predictions, leading to over-policing, which will in turn continue to generate misleading data and racist predictions.
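The vicious circle can be illustrated with a deliberately simplified toy simulation. It is a sketch under strong assumptions, not a model of any real system: there are two hypothetical districts, A and B, with exactly the same true level of crime; patrols are sent only to the district with the most recorded crime; and crime is only recorded where officers are present. All names and numbers are invented for the example.

```python
def simulate_feedback(recorded, true_crimes_per_round, rounds):
    """Each round, patrol only the district with the most recorded crime.

    Assumption: crime is only recorded where officers are present, so only
    the patrolled district adds new entries to the database.
    """
    recorded = dict(recorded)  # copy so the caller's data is left untouched
    history = [dict(recorded)]
    for _ in range(rounds):
        # The "prediction": the district with the highest recorded count.
        target = max(recorded, key=recorded.get)
        # Only the patrolled district generates new records.
        recorded[target] += true_crimes_per_round[target]
        history.append(dict(recorded))
    return history


# Hypothetical numbers: both districts have the same true crime level,
# but district B starts with slightly more recorded crime.
start = {"A": 10, "B": 12}
true_crimes = {"A": 5, "B": 5}
history = simulate_feedback(start, true_crimes, rounds=4)
for step, counts in enumerate(history):
    print(step, counts)
# After 4 rounds: {'A': 10, 'B': 32}
```

Although both districts experience the same amount of crime in this toy setup, the small initial gap in the records keeps growing, because the data only captures what the police are positioned to see.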

When it comes to mining data about particular individuals to gauge the likelihood that they may commit a crime, it is well known that online activity does not accurately represent human behaviour in the real world. The idea that predictive policing could in the future be used to arrest someone before they commit a crime might seem far-fetched. Yet the notion that data-driven predictive technologies justify heightened surveillance of supposedly high-risk groups and individuals, even where the assessment that they are high-risk is dubious, is not far from reality.

3. Discrimination

Another drawback of predictive policing is that it can produce biased results. The ACLU criticised the practice for its tendency to perpetuate racial profiling. A key limitation of any algorithm is that, when fed with biased data, it magnifies the biases emerging from conventional processes, further intensifying unwarranted discrepancies in enforcement.

Consider the example of two teenagers who both regularly smoke marijuana. Teenager A lives in a neighbourhood with lower recorded levels of crime, whereas teenager B lives in an area with higher recorded levels of crime. If the police focus on the area with the highest predicted crime risk, A and B will become, respectively, under- and over-policed. Teenager B is therefore more likely than teenager A to end up in jail. At the individual level, the asymmetry damages B’s prospects, because having a criminal record will negatively impact their access to careers and education. At a systemic level, this dynamic might undermine vital goals of policing, such as building community trust. Current systems are blind to their impact in these areas, and may do unnoticed harm. Predictive policing systems exacerbate this trend by making policing merely about the numeric reduction of the rates of detected, rather than actual, crime.

4. Accountability

Predictive policing reduces the accountability of law enforcement. As most processes in data analytics are automated, relying on them might undermine the ability of officers and departments to explain and justify their decisions in a meaningful way. Further, because of the complexity and secrecy of these tools, police and communities currently have limited capacity to assess the risks of biased data or faulty prediction systems.

To summarise, while predictive policing carries the promise of efficient and fair policing, in reality it is inaccurate in its predictions and poses serious challenges to creating a freer and fairer society. Predictive policing technologies are likely to gain traction in the coming years, because they are cheaper than conventional policing practices. However, the current legal framework applicable to these technologies is unclear and unhelpful. This must change. EU Member States should query whether we should be building these systems at all. The hunt for fair and transparent algorithmic decision-making might be insufficient to address the issues they raise.